
Dataflows that load data to an Azure Data Lake Storage account store data in Common Data Model folders. App makers can then use Power Apps and Power Automate to build rich applications that use this data.Īzure Data Lake Storage lets you collaborate with people in your organization using Power BI, Azure Data, and AI services, or using custom-built Line of Business Applications that read data from the lake. Dataverse includes a base set of standard tables that cover typical scenarios, but you can also create custom tables specific to your organization and populate them with data by using dataflows.

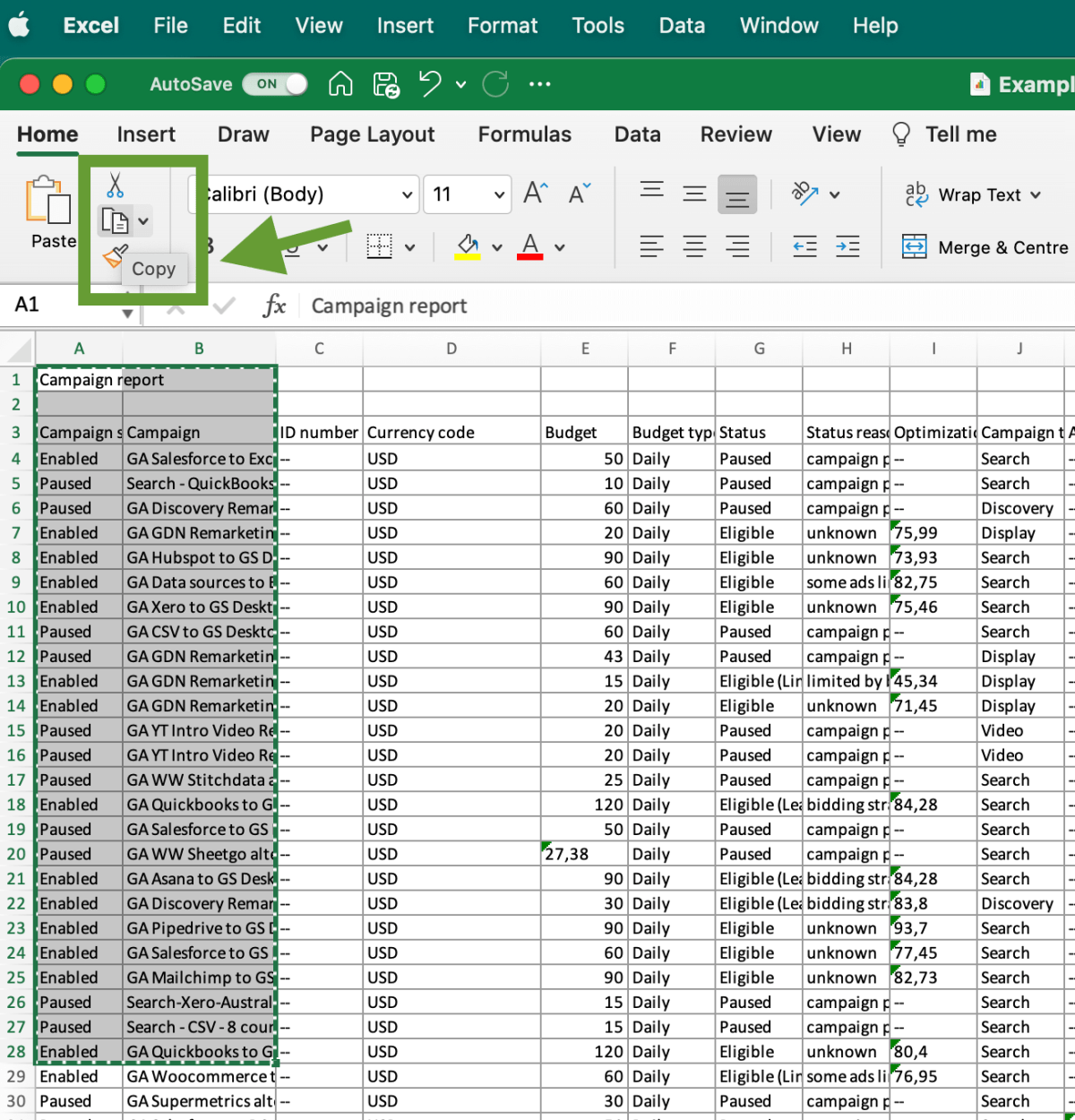

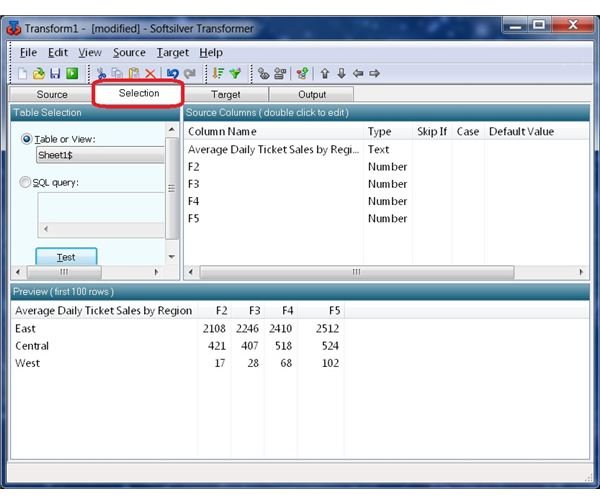

Each column in the table is designed to store a certain type of data, for example, name, age, salary, and so on. A table is a set of rows (formerly referred to as records) and columns (formerly referred to as fields/attributes). Data within Dataverse is stored in a set of tables. Load data to Dataverse or Azure Data Lake Storage: Depending on your use case, you can store data prepared by Power Platform dataflows in the Dataverse or your organization's Azure Data Lake Storage account:ĭataverse lets you securely store and manage data that's used by business applications. Dataflows are created and easily managed in app workspaces or environments, in Power BI or Power Apps, respectively, enjoying all the capabilities these services have to offer, such as permission management and scheduled refreshes. You can create dataflows by using the well-known, self-service data preparation experience of Power Query. This engine takes care of all the transformation and dependency logic-cutting time, cost, and expertise to a fraction of what's traditionally been required for those tasks. Business analysts, BI professionals, and data scientists can use dataflows to handle the most complex data preparation challenges and build on each other's work, thanks to a revolutionary model-driven calculation engine. With dataflows, ETL logic is elevated to a first-class artifact within Microsoft Power Platform services, and includes dedicated authoring and management experiences. Previously, extract, transform, load (ETL) logic could only be included within semantic models in Power BI, copied over and over between semantic models, and bound to semantic model management settings. Self-service data prep for big data with dataflows: Dataflows can be used to easily ingest, cleanse, transform, integrate, enrich, and schematize data from a large and ever-growing array of transactional and observational sources, encompassing all data preparation logic. 
With dataflows, Microsoft brings the self-service data preparation capabilities of Power Query into the Power BI and Power Apps online services, and expands existing capabilities in the following ways: To make data preparation easier and to help you get more value out of your data, Power Query and Power Platform dataflows were created.

Such projects can require wrangling fragmented and incomplete data, complex system integration, data with structural inconsistency, and a high skill set barrier.

It can consume as much as 60 to 80 percent of the time and cost for a typical analytics project. In the world of ever-expanding data, data preparation can be difficult and expensive. Using dataflows with Microsoft Power Platform makes data preparation easier, and lets you reuse your data preparation work in subsequent reports, apps, and models.
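As a rough illustration of how a custom application might read the Common Data Model folders mentioned above: such a folder typically holds a `model.json` metadata file next to the data files, describing each table, its columns, and where its data partitions live. The sketch below parses a small, made-up `model.json` in Python. The dataflow name `SalesDataflow`, the `Customer` table, and the partition URL are all hypothetical, and the sample is trimmed well below what a real file contains; only the general field layout (`entities`, `attributes`, `partitions`) is assumed from the format.

```python
import json

# Hypothetical, heavily trimmed model.json content. A real file written by a
# dataflow carries more metadata; the names and URL here are invented.
SAMPLE_MODEL_JSON = """
{
  "name": "SalesDataflow",
  "version": "1.0",
  "entities": [
    {
      "$type": "LocalEntity",
      "name": "Customer",
      "attributes": [
        {"name": "CustomerId", "dataType": "int64"},
        {"name": "FullName", "dataType": "string"}
      ],
      "partitions": [
        {"name": "Part001",
         "location": "https://example.invalid/Customer/part001.csv"}
      ]
    }
  ]
}
"""


def summarize_model(model_text):
    """Return {table name: {"columns": [(name, type)], "files": [url]}}."""
    model = json.loads(model_text)
    summary = {}
    for entity in model.get("entities", []):
        # Each attribute describes one column of the table.
        columns = [
            (attr["name"], attr.get("dataType", "unknown"))
            for attr in entity.get("attributes", [])
        ]
        # Each partition points at one data file for the table.
        files = [part["location"] for part in entity.get("partitions", [])]
        summary[entity["name"]] = {"columns": columns, "files": files}
    return summary


if __name__ == "__main__":
    for table, info in summarize_model(SAMPLE_MODEL_JSON).items():
        print(table)
        for column_name, data_type in info["columns"]:
            print(f"  {column_name}: {data_type}")
        for location in info["files"]:
            print(f"  data file: {location}")
```

In practice the metadata would be downloaded from the storage account rather than embedded as a string, and the partition files would then be read with whatever CSV or dataframe tooling the application already uses.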
